Why Your D2C Channel Misleads You on Demand

You spent the last five years building a direct-to-consumer channel. You optimized checkout flows. You compressed shipping times. You A/B tested button colors and email subject lines. You celebrated every uptick in conversion rate and average order value. And yet, you are still guessing about what to make next season. You are closer to consumers than ever before and further from understanding what they actually want to buy.

Here is the uncomfortable truth. D2C penetration grew from 14 percent to 24 percent of total retail sales between 2020 and 2025. Markdown rates stayed stuck at 25 to 30 percent across most categories. You nearly doubled your direct consumer touchpoints and got almost nothing back in terms of product selection accuracy. The problem is not your data. The problem is what you are using it for. You built D2C to sell more efficiently. You should have built it to learn more effectively. What you need is D2C demand intelligence, not just a better cash register.

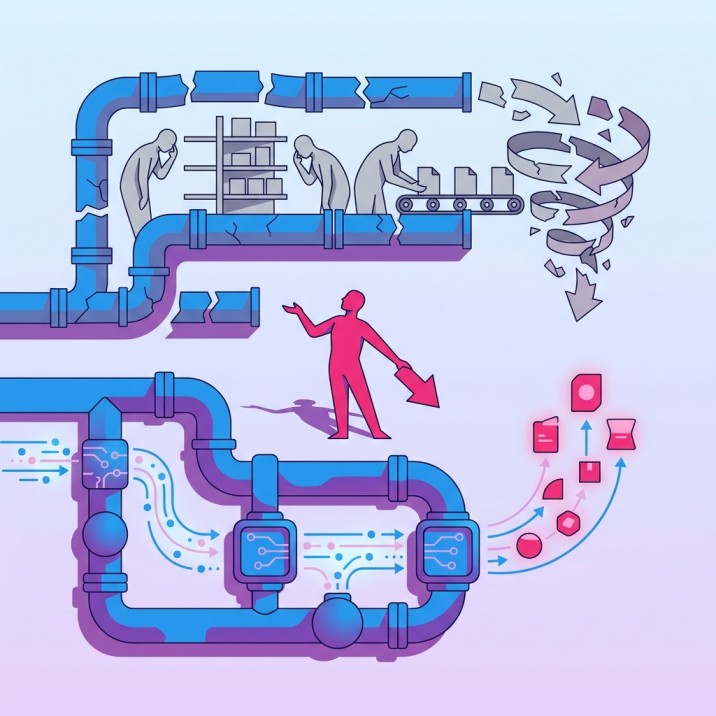

Most retailers treat their D2C channel like a better cash register: faster transactions, cleaner attribution, higher margins per unit sold. What they miss is the strategic intelligence sitting in search behavior, browse patterns, and early demand signals, the data that tells you what consumers want before you commit to making it. Instead, you are using D2C data the same way you used wholesale sell-through reports: to understand what happened after you had already made the wrong thing. Speed without direction is useless. You have the speed. You have the proximity. You are still moving in the wrong direction because you are committing to products before validating demand.

Your D2C success metrics tell you how well you sell what you made. They do not tell you whether you should have made it in the first place. Conversion rate measures how many people bought from the assortment you offered. It does not measure how many people left because you did not offer what they wanted. Average order value tells you how much customers spent on available inventory. It does not tell you how much they would have spent if the right products existed. Repeat purchase rate shows loyalty to your brand. It does not show the silent churn of customers who tried you twice, found the assortment lacking, and never came back.

The Cost Of Proximity Without Prediction

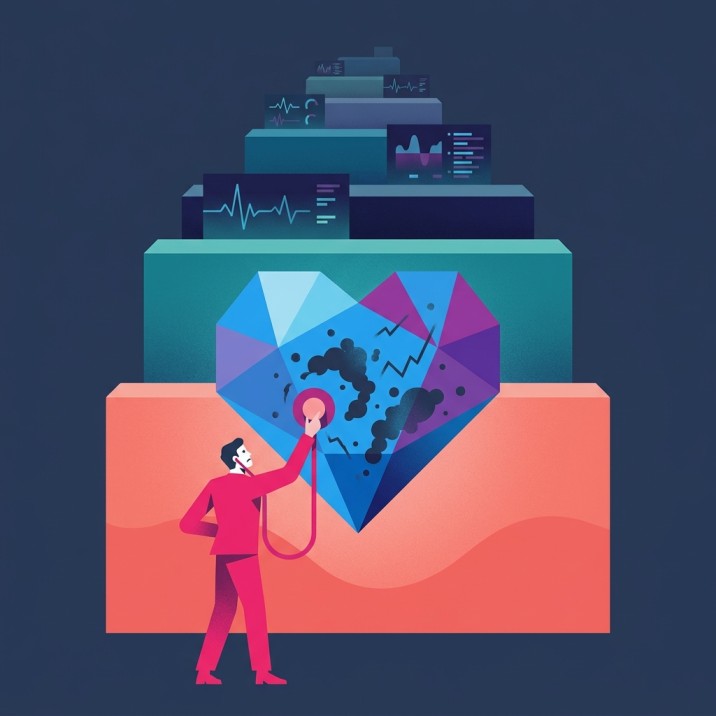

Early doctors had proximity to patients but limited diagnostic tools. They could see symptoms. They could not predict disease before it manifested. Modern medicine changed when continuous monitoring systems arrived. Heart rate variability detected cardiac events before chest pain. Glucose monitors flagged diabetic crises before fainting spells. The shift was from reactive diagnosis to predictive intelligence. Proximity plus prediction.

Retail is still in the proximity without prediction phase. D2C gives you access to the patient. You can see every click, every cart abandonment, every return reason code. But you are still diagnosing after symptoms appear. Slow sell-through is chest pain. Markdowns are the fainting spell. By the time you see these signals, the damage is done. Inventory is committed. Margin is lost. The assortment failure already happened six months ago when you greenlit production without validating demand.

A leading fashion retailer launched a D2C platform and celebrated 40 percent year-over-year growth in online revenue. Markdown rates in the same period increased from 22 percent to 28 percent. The channel was selling more. The company was learning nothing. They tracked cart conversion obsessively. They ignored search queries for styles they did not carry. They measured repeat purchase rates. They missed the customers who searched twice, found nothing, and never returned. Proximity without prediction. Revenue without intelligence.

The medical analogy holds because the cost structures are alike. Treating disease after it manifests is far more expensive than preventing it. A cardiac event can cost hundreds of thousands in emergency intervention. A glucose monitor costs hundreds and can head off the crisis entirely. Markdowns are your cardiac events. Pre-commitment demand signals are your glucose monitor. One destroys margin after the fact. The other protects it before commitment. You are still paying for emergency intervention when you should be investing in continuous monitoring.

Why Consumer Data Utilization Fails At The Product Decision Layer

You have more consumer data than any retail generation in history. Purchase history. Browsing behavior. Email engagement. Social media sentiment. App usage patterns. And none of it is answering the question that matters most. What should we make next?

The failure is not volume. The failure is application. Most consumer data utilization strategies optimize the wrong layer of decision making. You use data to personalize recommendations from existing inventory. To optimize pricing on products already made. To target promotions for styles already sitting in warehouses. All of this is downstream. The product is already wrong. You are just trying to sell it more efficiently to the wrong people at the wrong price.

A major sportswear brand built a sophisticated customer data platform that integrated purchase history, browsing behavior, and loyalty program activity. They used it to send personalized emails featuring products each customer was statistically likely to buy. Open rates improved. Click-through rates improved. Conversion rates on those emails improved. Markdown rates across the assortment stayed flat. They optimized distribution of the existing assortment. They did nothing to improve the assortment itself. The data never touched the product creation process. It only touched the product selling process.

The structural problem is timing. By the time a product exists in your system, it is too late to use consumer data to decide whether it should exist. You can use data to decide who sees it, when they see it, and what price they see. You cannot use data to decide whether making it was the right call in the first place. That decision happened months earlier, in a room with merchandising teams looking at inspiration boards and last season’s sell-through reports. No consumer data in sight.

This is why D2C proximity has not translated to better product decisions. The data arrives after the decision. You built a real-time feedback loop for selling. You still have a six-month lag for learning. The consumer tells you what they want through search behavior in March. You decide what to make in April based on last season’s sales. You launch the product in September. You discover in November that you were wrong. You mark it down in December. The consumer told you in March. You listened in November. Eight months too late.

Markdown Reduction Strategy Requires Upstream Intervention

Markdown reduction strategy has been stuck in the same tactical loop for two decades. Faster inventory turns. Better demand forecasting. Dynamic pricing. Improved supply chain responsiveness. All of these treat markdowns as a distribution problem. Markdowns are a product problem. You are making the wrong things. Everything downstream is just damage control.

The math is unforgiving. A 25 percent markdown rate means you are giving away a quarter of your full-price revenue on products that should not have been made, and because every markdown dollar comes straight out of gross margin, the share of margin you lose is larger still. Faster turns do not fix that. They just help you realize the loss sooner. Better forecasting does not fix that. It just gives you more accurate predictions of how badly the wrong product will sell. Dynamic pricing does not fix that. It just helps you find the price point where someone will finally buy the thing nobody wanted at full price.
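A quick worked example makes the arithmetic concrete. This is a minimal sketch, assuming "markdown rate" means markdown dollars as a share of the full-price value of goods sold, and using a hypothetical 60 percent full-price gross margin; both are illustrative assumptions, not figures from the retailers described here.

```python
def margin_lost_share(markdown_rate: float, full_price_margin: float) -> float:
    """Share of potential gross margin lost to markdowns.

    Assumes markdown_rate = markdown dollars / full-price value of units sold,
    and full_price_margin = gross margin rate if every unit sold at full price.
    Markdowns cut revenue without cutting cost, so each markdown dollar
    comes straight out of gross margin.
    """
    if not 0 < full_price_margin <= 1:
        raise ValueError("full_price_margin must be in (0, 1]")
    return markdown_rate / full_price_margin


full_price_value = 1_000_000   # hypothetical full-price value of goods sold
markdown_rate = 0.25           # the 25 percent markdown rate from the text
full_price_margin = 0.60       # hypothetical 60 percent margin at full price

potential_margin = full_price_value * full_price_margin   # margin if nothing were discounted
markdown_dollars = full_price_value * markdown_rate       # revenue given away
realized_margin = potential_margin - markdown_dollars

print(f"Realized margin: {realized_margin:,.0f}")
print(f"Share of potential margin lost: {margin_lost_share(markdown_rate, full_price_margin):.1%}")
```

Under these assumptions, a quarter of revenue given away erases roughly 42 percent of the potential gross margin, which is why the loss is larger than the headline markdown rate suggests.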

A global home goods retailer implemented a markdown optimization engine that used machine learning to determine optimal discount timing and depth. Markdown dollars as a percentage of total sales dropped by two percentage points. The CFO celebrated. The Chief Merchant missed the real story. They were still marking down 23 percent of the assortment. The algorithm just helped them lose less money on products that should not exist. They optimized the symptom. They ignored the disease.

Real markdown reduction happens upstream. Before the purchase order. Before the factory commitment. Before the design is finalized. It happens when you validate demand signals before committing capital and inventory risk. This requires a different data architecture entirely. Not post-purchase transaction data. Pre-commitment demand signals. Search volume for attributes you do not carry. Browse behavior on competitor sites. Social listening for unmet needs. Engagement patterns on styles you test but have not produced.

A leading home improvement chain tested this approach on a single category. They monitored search queries on their site for product attributes they did not currently offer. Customers were searching for a specific finish type the assortment did not include. The search volume was significant and persisted across weeks. The category team added the finish type to the next product development cycle. The resulting SKUs sold through at 94 percent full price. No markdowns. No guessing. They listened before committing, not after failing.
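The signal the home improvement chain acted on, searches for an attribute the assortment cannot satisfy that keep recurring week after week, can be flagged with very little machinery. This is a minimal sketch, assuming search logs have already been reduced to (week, attribute) pairs and that the carried attributes are known. The thresholds `min_weeks` and `min_total` and the finish names in the example are hypothetical.

```python
from collections import defaultdict


def persistent_unmet_attributes(search_events, carried_attributes, min_weeks=4, min_total=50):
    """Flag searched-for attributes that the current assortment does not carry.

    search_events: iterable of (week, attribute) pairs extracted from search logs
    carried_attributes: set of attributes the current assortment includes
    An attribute is flagged only if it was searched in at least `min_weeks`
    distinct weeks and at least `min_total` times overall, so one-off spikes
    are ignored. Returns {attribute: (weeks_seen, total_searches)}.
    """
    weeks_seen = defaultdict(set)
    totals = defaultdict(int)
    for week, attribute in search_events:
        if attribute in carried_attributes:
            continue  # demand the assortment already serves
        weeks_seen[attribute].add(week)
        totals[attribute] += 1

    return {
        attr: (len(weeks_seen[attr]), totals[attr])
        for attr in totals
        if len(weeks_seen[attr]) >= min_weeks and totals[attr] >= min_total
    }


# Hypothetical log: steady demand for one finish, a one-week spike for another.
events = [(w, "matte black") for w in range(1, 7) for _ in range(12)]  # 6 weeks x 12 searches
events += [(3, "rose gold")] * 80                                      # single-week spike
events += [(w, "brushed nickel") for w in range(1, 7)]                 # already carried
print(persistent_unmet_attributes(events, carried_attributes={"brushed nickel"}))
# {'matte black': (6, 72)}
```

Requiring persistence across distinct weeks, not just raw volume, is what separates a durable assortment gap from a promotional or viral spike.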

That is the shift. Markdown reduction strategy stops being about better ways to sell the wrong products. It starts being about not making the wrong products in the first place. The data exists. The search queries are sitting in your logs right now. The browse patterns are in your analytics. The gap is not data availability. The gap is connecting that data to the product decision before the commitment happens.

Building D2C Learning Systems That Predict Before You Commit

D2C learning systems are not the same as D2C analytics platforms. Analytics platforms tell you what happened. Learning systems tell you what to do next. Analytics platforms generate reports. Learning systems generate decisions. The difference is whether the output changes the product or just the marketing.

Most D2C platforms are built for transaction optimization. They track the customer journey from awareness to purchase. They measure funnel conversion at each stage. They identify drop-off points and optimize them. All of this assumes the product is correct and the job is getting more people to buy it. A learning system assumes the opposite. The job is figuring out whether the product should exist before you make it.

The architecture is different. A transaction-focused system integrates point-of-sale data, web analytics, email engagement, and ad performance. A learning system integrates search behavior, browse patterns without purchase, competitor assortment monitoring, and social listening for unmet needs. One measures success. The other predicts failure before it happens.

A major auto parts retailer built a learning system around search behavior on their D2C platform. They tracked not just what customers bought, but what they searched for and did not find. They categorized these searches by part type, vehicle compatibility, and price range. They shared this data with their product team monthly. The product team used it to identify assortment gaps and prioritize new SKU additions. Within two product cycles, their search-to-purchase conversion rate improved by 18 percentage points. Not because they optimized the search experience. Because they started carrying what people were searching for.

That is a learning system. The data changes what you make, not just how you sell it. The feedback loop runs from consumer signal to product decision, not from consumer signal to marketing tactic. The system learns what the market wants and feeds that learning into the creation process before commitment.

Predictive assortment planning is the output of a learning system. You stop planning assortments based on what sold last season. You start planning based on what consumers are signaling demand for right now. You monitor search trends. You track browse behavior on styles you have not produced. You test concepts digitally before committing to production. You validate demand before you validate the purchase order.

This does not require a complete technology overhaul. It requires connecting data you already have to decisions you are already making. Your search logs contain demand signals. Your product team is not seeing them. Your browse analytics show interest in attributes you do not carry. Your merchants are not using that to guide line planning. The data exists. The decision process has not adapted to use it.

Retail Diagnostic Intelligence As Competitive Advantage

Retail diagnostic intelligence is the ability to diagnose assortment failures before they manifest as markdowns. It is the glucose monitor, not the emergency room. It is the early warning system that prevents the crisis instead of managing it after the fact.

The competitive advantage is not better data. Everyone has data. The advantage is using data to make fewer wrong products instead of selling wrong products more efficiently. A retailer with 15 percent markdown rates and average D2C analytics beats a retailer with 28 percent markdown rates and sophisticated D2C analytics every time. Margin is not destroyed in the marketing funnel. It is destroyed in the product decision.

A leading fast fashion retailer reduced markdown rates from 26 percent to 16 percent over three product cycles by implementing pre-commitment demand validation. They did not improve their supply chain. They did not hire better designers. They stopped greenlighting products until search and browse data validated consumer interest. They tested styles digitally before producing them physically. They monitored engagement and only moved forward on designs that showed strong pre-commitment signals. The result was not just lower markdowns. It was higher full-price sell-through, stronger margins, and less working capital tied up in inventory that would eventually be discounted.

That is diagnostic intelligence. The system predicts which products will fail before you commit capital to making them. It does not predict with perfect accuracy. It does not need to. It just needs to be better than gut instinct and last season’s sales data. A 60 percent accuracy rate on predicting product failures before commitment is transformational compared to discovering failures after production.
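Whether a validation process beats gut instinct can be checked once a season's outcomes are in. This is a minimal sketch, assuming each product has a recorded pre-commitment call (will fail or will not) and a realized outcome, where "failed" means missing some full-price sell-through cutoff the team defines. It reports accuracy alongside a naive majority-class baseline, since an accuracy figure means little without a comparison. The ten products are hypothetical.

```python
def failure_prediction_report(predicted_fail, actually_failed):
    """Score pre-commitment failure predictions against realized outcomes.

    predicted_fail, actually_failed: equal-length lists of booleans, one per product.
    Returns accuracy, precision and recall for the "will fail" call, plus the
    accuracy of always predicting the most common outcome (a naive baseline).
    """
    if len(predicted_fail) != len(actually_failed) or not predicted_fail:
        raise ValueError("need two non-empty lists of equal length")
    pairs = list(zip(predicted_fail, actually_failed))
    tp = sum(p and a for p, a in pairs)       # flagged and did fail
    fp = sum(p and not a for p, a in pairs)   # flagged but sold well
    fn = sum(a and not p for p, a in pairs)   # failures the gate missed
    correct = sum(p == a for p, a in pairs)
    n = len(pairs)
    fail_share = sum(actually_failed) / n
    return {
        "accuracy": correct / n,
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
        "baseline_accuracy": max(fail_share, 1 - fail_share),
    }


# Ten hypothetical products: the gate's call versus what happened after launch.
predicted = [True, True, False, False, True, False, False, True, False, False]
failed    = [True, False, False, True, True, False, False, True, False, True]
print(failure_prediction_report(predicted, failed))
# {'accuracy': 0.7, 'precision': 0.75, 'recall': 0.6, 'baseline_accuracy': 0.5}
```

Recall is the number that maps most directly to avoided markdowns: it is the share of eventual failures caught before capital was committed.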

The retailers who win the next decade will not be the ones with the most D2C revenue. They will be the ones who turned D2C into a learning system that makes better products. Revenue is a lagging indicator. Product selection accuracy is a leading indicator. You can grow revenue by discounting bad products. You cannot build a sustainable business that way. You can grow revenue by making products consumers actually want at prices they are willing to pay. That requires knowing what they want before you make it.

Transforming D2C From Sales Channel To Intelligence System

The transformation from sales channel to intelligence system is not a technology project. It is a decision-making process redesign. The question is not what tools you buy. The question is whether demand signals reach product decisions before commitments are made.

Most organizations have the data. They do not have the process. Search data sits in the analytics team. Product decisions happen in the merchandising team. The two do not talk until after the product launches and the analytics team is asked to explain why it is not selling. By then, the learning is retrospective. The product is made. The inventory is committed. The only question left is how much margin you will destroy trying to move it.

The process redesign is simple in concept. Before any product is greenlit for production, it must pass through a demand validation gate. That gate uses pre-commitment demand signals. Search volume for the attributes the product contains. Browse engagement on similar styles from competitors. Social listening for consumer interest in the category. Digital concept testing to gauge interest before physical production. If the signals are weak, the product does not move forward. If the signals are strong, you commit.
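The gate described above can be expressed as a weighted score over the four signal types it names. This is a minimal sketch, assuming each signal has already been scaled to a 0 to 1 range (for example, a percentile rank within the category). The weights, the 0.6 cutoff, and the example concepts are hypothetical and would need calibrating against past seasons.

```python
from dataclasses import dataclass


@dataclass
class DemandSignals:
    """Pre-commitment signals for one product concept, each pre-scaled to 0..1."""
    attribute_search: float    # search volume for the attributes the product contains
    competitor_browse: float   # browse engagement on similar competitor styles
    social_interest: float     # social-listening interest in the category
    concept_test: float        # engagement in digital concept testing


# Hypothetical weights and cutoff; calibrate against outcomes from past seasons.
WEIGHTS = {"attribute_search": 0.35, "competitor_browse": 0.15,
           "social_interest": 0.15, "concept_test": 0.35}
THRESHOLD = 0.6


def validation_gate(signals: DemandSignals, threshold: float = THRESHOLD):
    """Return (passes, score): commit only if the weighted signal score clears the cutoff."""
    score = sum(weight * getattr(signals, name) for name, weight in WEIGHTS.items())
    return score >= threshold, round(score, 3)


strong = DemandSignals(attribute_search=0.8, competitor_browse=0.6,
                       social_interest=0.7, concept_test=0.9)
weak = DemandSignals(attribute_search=0.2, competitor_browse=0.5,
                     social_interest=0.4, concept_test=0.3)
print(validation_gate(strong))  # (True, 0.79)
print(validation_gate(weak))    # (False, 0.31)
```

A weighted average lets a strong concept test offset thin search volume. A stricter gate could also require a minimum on each individual signal, so that no single weak signal slips through.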

This is not about killing creativity. It is about killing guesses. Designers still design. Merchants still curate. The difference is they validate demand before committing capital. A designer creates ten concepts. The validation process identifies which three have the strongest pre-commitment signals. Those three get produced. The other seven get shelved or reworked. You still made great design. You just did not make great design that nobody wants to buy.

A major sportswear brand implemented this process for a new footwear line. Designers created fifteen colorway concepts. The brand tested all fifteen digitally through social ads and on-site browse behavior. Three colorways generated 70 percent of the engagement. The brand produced those three in depth and the remaining twelve in limited test quantities. The three high-signal colorways sold through at 96 percent full price. The twelve low-signal colorways averaged 68 percent sell-through and required markdowns. The brand learned which signals predict success. Next season, they only produced high-signal colorways. Markdown rates dropped. Margins improved. The design team was not constrained. They were just guided by what consumers actually wanted.
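The split the sportswear brand made, producing the few concepts that capture most of the engagement in depth and everything else in test quantities, can be sketched as a cumulative-share cutoff. This is a minimal sketch, assuming engagement per concept is a single count from digital tests; the 70 percent target mirrors the example above, while the colorway names and counts are hypothetical.

```python
def tier_concepts(engagement, depth_share=0.70):
    """Split concepts into 'depth' and 'test' tiers by share of total engagement.

    engagement: {concept_name: engagement count from digital tests}
    The smallest set of top-ranked concepts whose combined engagement reaches
    `depth_share` of the total goes into production depth; the rest get
    limited test quantities. Returns (depth_list, test_list).
    """
    total = sum(engagement.values())
    if total <= 0:
        raise ValueError("no engagement recorded")
    ranked = sorted(engagement, key=engagement.get, reverse=True)
    depth, cumulative = [], 0
    for concept in ranked:
        if cumulative / total >= depth_share:
            break
        depth.append(concept)
        cumulative += engagement[concept]
    depth_set = set(depth)
    test = [c for c in ranked if c not in depth_set]
    return depth, test


# Fifteen hypothetical colorways: three strong ones and twelve weak ones.
engagement = {"cw01": 3000, "cw02": 2500, "cw03": 1500}
engagement.update({f"cw{i:02d}": 250 for i in range(4, 16)})
depth, test = tier_concepts(engagement)
print(depth)      # ['cw01', 'cw02', 'cw03']
print(len(test))  # 12
```

Keeping the low-signal concepts in small test runs, rather than dropping them, is what lets the brand check next season whether the signals actually predicted sell-through.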

That is the shift. D2C stops being a channel where you sell what you made. It becomes the system that tells you what to make. The intelligence flows upstream, not just downstream. You still optimize conversion rates and average order values. But you do it on an assortment that was validated before production, not justified after markdowns.

From D2C Metrics To D2C Demand Intelligence

The metrics you track define the business you build. If you only measure conversion rate, average order value, and customer acquisition cost, you will only optimize selling. If you measure search-to-assortment match rate, pre-commitment signal strength, and demand validation accuracy, you will optimize making.

D2C demand intelligence requires different metrics. Not instead of transaction metrics. In addition to them. You still need to know conversion rates. You also need to know what percentage of searches on your site return zero results because you do not carry what consumers want. You still need to know average order value. You also need to know how many high-intent browsers leave without purchasing because the assortment does not match their needs. You still need to know repeat purchase rates. You also need to know how many customers tried you once, found the selection lacking, and never returned.

These are diagnostic metrics. They tell you where the product is failing before the failure shows up in markdowns. A 15 percent zero-result search rate means 15 percent of your site searches are telling you exactly what shoppers want and you are not listening. A 40 percent high-intent browse abandonment rate means two in five of your most engaged visitors are leaving without buying, often because the assortment does not match their needs. A 30 percent one-time purchase rate means nearly a third of your customers are not coming back, and product selection is likely a major reason why.
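All three diagnostic metrics can be computed from data most D2C stacks already log. This is a minimal sketch, assuming session records that count searches, zero-result searches, and product views, plus a list of order customer IDs for the measurement window. The `Session` structure and the five-view "high-intent" cutoff are hypothetical definitions, not industry standards.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Session:
    searches: int              # site searches in the session
    zero_result_searches: int  # searches that returned no products
    product_views: int         # product detail pages viewed
    purchased: bool


def zero_result_search_rate(sessions):
    """Share of all site searches that returned no products."""
    total = sum(s.searches for s in sessions)
    return sum(s.zero_result_searches for s in sessions) / total if total else 0.0


def high_intent_abandonment_rate(sessions, min_views=5):
    """Share of high-intent sessions (>= min_views product views, a hypothetical cutoff) with no purchase."""
    high_intent = [s for s in sessions if s.product_views >= min_views]
    if not high_intent:
        return 0.0
    return sum(not s.purchased for s in high_intent) / len(high_intent)


def one_time_purchase_rate(order_customer_ids):
    """Share of purchasing customers with exactly one order in the window."""
    orders_per_customer = Counter(order_customer_ids)
    if not orders_per_customer:
        return 0.0
    single = sum(1 for n in orders_per_customer.values() if n == 1)
    return single / len(orders_per_customer)


sessions = [
    Session(searches=4, zero_result_searches=1, product_views=8, purchased=False),
    Session(searches=2, zero_result_searches=0, product_views=6, purchased=True),
    Session(searches=3, zero_result_searches=1, product_views=2, purchased=False),
    Session(searches=1, zero_result_searches=0, product_views=7, purchased=False),
]
orders = ["c1", "c2", "c2", "c3", "c4", "c4", "c4"]

print(f"Zero-result search rate: {zero_result_search_rate(sessions):.0%}")
print(f"High-intent abandonment: {high_intent_abandonment_rate(sessions):.0%}")
print(f"One-time purchase rate:  {one_time_purchase_rate(orders):.0%}")
```

Note that the zero-result rate is measured per search, not per visitor; the same shopper retrying several phrasings will count several times, which is usually what you want when gauging the size of a gap.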

A global home goods retailer started tracking zero-result search rates by category. They discovered that 22 percent of searches in one category returned no results. Customers were searching for a specific material type the assortment did not include. The category team added that material type to the next line plan. Zero-result search rates in that category dropped to 8 percent. Conversion rates improved. Repeat purchase rates improved. The metric diagnosed the problem. The product decision fixed it.

This is what D2C demand intelligence looks like in practice. The channel generates signals. The signals inform product decisions. The product decisions reduce assortment failures. The result is higher full-price sell-through, lower markdowns, and better capital efficiency. You are not just selling more. You are making better.

CONCLUSION

You built a direct-to-consumer channel to get closer to your customer. You succeeded. You are closer than ever. But proximity without prediction is just expensive access to your own failures. D2C demand intelligence is not about better analytics on what you sold. It is about better decisions on what to make. The retailers who win are the ones who stop using D2C data to optimize selling and start using it to eliminate guessing. Your channel is not dumb. Your decision process is. Fix the process, and the data you already have will tell you exactly what to make next.

Orbix Trends, Orbix Assort, Orbix Sense, and Orbix Price work together as the operating system of intelligence from create to curate. They connect pre-commitment demand signals to product decisions before capital is committed and margin is destroyed. If your team wants to see what this looks like for your specific category, start with a conversation at https://www.stylumia.ai/get-a-demo/

KEY TAKEAWAYS

D2C proximity without prediction just gives you expensive access to your own product failures after the damage is done.

Markdown rates stayed flat while D2C penetration climbed from 14 to 24 percent of retail sales because retailers optimized selling, not making.

Consumer data utilization fails at the product decision layer because the data arrives after the commitment, not before.

Real markdown reduction happens upstream when you validate demand signals before greenlighting production, not after inventory lands.

D2C learning systems predict assortment failures before commitment by connecting search behavior and browse patterns to product decisions.

Retail diagnostic intelligence is the competitive advantage, diagnosing what will fail before you make it instead of after you markdown it.

The transformation from sales channel to intelligence system is a decision process redesign, not a technology upgrade.

FREQUENTLY ASKED QUESTIONS

Q1: What is D2C demand intelligence and why does it matter more than D2C sales growth?

D2C demand intelligence is the ability to use direct consumer signals to predict what products will succeed before you commit to making them. It matters more than sales growth because revenue from discounted wrong products destroys margin while revenue from validated right products builds it. You can grow sales by marking down bad assortments. You cannot build a sustainable business that way. Intelligence determines what you make. Sales just measure how well you move it.

Q2: Why do higher D2C penetration rates not automatically improve product selection accuracy?

Because most retailers use D2C data to optimize downstream selling, not upstream making. The data tells you who bought what and how to sell more of it. It does not tell you whether you should have made it in the first place unless you specifically architect systems to capture pre-commitment demand signals like search behavior for products you do not carry and browse patterns that do not convert. Proximity to the customer means nothing if the feedback loop does not reach product decisions before commitment.

Q3: How do you measure whether your D2C channel is actually learning or just selling?

Track diagnostic metrics, not just transaction metrics. Zero-result search rates tell you what consumers want that you do not carry. High-intent browse abandonment rates show how many engaged visitors leave because the assortment does not match their needs. One-time purchase rates reveal customers who tried you and did not return, often because product selection disappointed. If these metrics are not improving while your D2C revenue grows, you are selling better but learning nothing.

Q4: What is the difference between demand forecasting and pre-commitment demand signals?

Demand forecasting predicts how much of a product you already decided to make will sell. Pre-commitment demand signals tell you whether you should make the product at all. Forecasting happens after the product decision. Demand signals happen before it. Forecasting optimizes inventory depth on existing SKUs. Demand signals validate whether the SKU should exist. One reduces overstock. The other prevents wrong stock.

Q5: Can small retailers implement D2C learning systems or is this only for large organizations?

Small retailers have an advantage because they have fewer organizational silos between data and decisions. You do not need enterprise platforms. You need a process where search logs, browse behavior, and competitor assortment monitoring inform product decisions before you commit to production. A small retailer can review search queries manually every week and use that to guide buying. A large retailer needs automated systems to do the same thing. The principle is identical. Validate demand before committing capital.

Q6: How does predictive assortment planning reduce markdowns more effectively than dynamic pricing?

Predictive assortment planning prevents you from making products that will require markdowns. Dynamic pricing just helps you lose less margin on products that should not exist. One is upstream intervention. The other is downstream damage control. A product validated by pre-commitment demand signals is far more likely to sell through at full price because consumers already told you they wanted it. A product that failed validation and got made anyway will require discounting no matter how sophisticated your pricing algorithm is.

Q7: What is the first step to transform D2C from a sales channel into a learning system?

Connect search data to product decisions. Start tracking what consumers search for on your site that returns zero results. Categorize those searches by product type, attribute, and frequency. Share that data with your product and merchandising teams monthly. Use it to identify assortment gaps and prioritize new product additions. This single change creates a feedback loop from consumer signal to product decision. Everything else builds from there.
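The first step above, categorizing zero-result searches by product type, attribute, and frequency, can begin with simple keyword matching. This is a minimal sketch, assuming you can export the raw text of zero-result queries; the `PRODUCT_TYPES` and `ATTRIBUTES` vocabularies and the example queries are hypothetical and would come from your own catalog taxonomy.

```python
from collections import Counter

# Hypothetical vocabularies; build these from your own catalog taxonomy.
PRODUCT_TYPES = {"sofa", "rug", "lamp", "chair"}
ATTRIBUTES = {"boucle", "rattan", "velvet", "oak", "brass"}


def categorize_query(query):
    """Map a raw search string to (product_type, attribute) by keyword matching."""
    tokens = query.lower().split()
    product_type = next((t for t in tokens if t in PRODUCT_TYPES), "unknown")
    attribute = next((t for t in tokens if t in ATTRIBUTES), "unknown")
    return product_type, attribute


def zero_result_report(zero_result_queries, top_n=5):
    """Count zero-result queries by (product_type, attribute), most frequent first."""
    counts = Counter(categorize_query(q) for q in zero_result_queries)
    return counts.most_common(top_n)


# Hypothetical month of zero-result queries.
queries = ["Boucle sofa", "boucle sofa green", "rattan lamp", "boucle chair", "Boucle Sofa"]
for (ptype, attr), n in zero_result_report(queries):
    print(f"{ptype:<8} {attr:<8} {n}")
```

Keyword matching like this misses plurals and spelling variants ("sofas", "bouclé"), so treat it as a starting point for the monthly review rather than a finished taxonomy.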